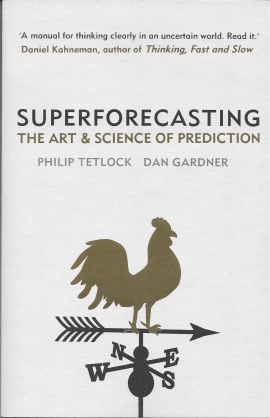

Superforecasting: The Art and Science of Prediction. Philip Tetlock and Dan Gardner. Crown; 352 pages; $8.14. Random House; £6.49.

This book is essential reading for all thinking people. I’m not going to write a new review here, since I think the existing reviews do it justice; I just wanted to add my voice to the recommendations. You don’t already know what it says (it’s far too packed with insights for that), and after reading it you won’t hear discussion of world events the same way again. Also, it’s quite short.

Reviews

- The Economist: “The techniques and habits of mind set out in this book are a gift to anyone who has to think about what the future might bring. In other words, to everyone.”

- Bryan Caplan: “If any book is worth reading cover to cover, it’s Superforecasting.”

- Kirkus Reviews: “A must-read field guide for the intellectually curious.”

- The Spectator: great last paragraph, too long to quote here.

- Management Today: “Superforecasting is a very good book. In fact it is essential reading—which I have never said in any of my previous MT reviews.”

- Website, Wikipedia


The general advice from Nassim Taleb and others is not to forecast at all, but instead to arrange the payoff matrix in your favour and let randomness happen. They call this being lucky. However, I’m intrigued by your recommendation and will rush out to buy the book.

Ah, I see. It’s not an account of people who make heroic predictions; it’s a concise guide to thinking properly. But now it has got me thinking properly, and when that happens I can’t sleep…

Very good read. From an individual’s perspective, although the method is very powerful, it isn’t very exciting to correctly assign 40/60 probabilities to a one-off event. You care about the outcome, but either way it feels like gambling. So people either don’t improve, or they go into fields where they face long sequences of forecasts of the same type and get good at those, such as speculation or poker.

The authors created a culture in which being a forecaster is a thing, and you forecast in a general way even though the questions are all different. That’s quite the achievement. Still, you never know how good any individual prediction is, even after the fact. You only know your overall scores, and you can scrutinise your own discipline. Discipline is so crucial that if you’re invested in the outcome, you’d be better off asking independent forecasters.

Like the authors, I wonder how big outcomes aggregate from granular, precise questions. In life as in quantum mechanics, the things that happen are the ones that can happen in more ways. If one of the many attempts on Hitler had succeeded, or Gorbachev hadn’t got the job, or Lehman hadn’t collapsed, would history have turned out mostly the same, if a little delayed and with a different cast?

My hunch is yes. I think the uniqueness of historical events is illusory. The outcomes we observe are most likely the probable ones, so if a big event happens, it is probably because many paths led to the same macro outcome. I wonder whether the authors’ future research will bear this out.

Very glad you read and liked it!